
In this paper, we investigate to which degree recent research on tools for model-based development of embedded systems meets the requirements for replicability of experimental results. Therefore, both the tool and the experimental subject data, essentially the models used in the experiments, should be made available following the so-called FAIR principles-Findability, Accessibility, Interoperability, and Reusability -aiming at the replicability of experimental results. In turn, experimental results should be replicable Footnote 1 in order to increase the validity and reliability of the outcomes observed in an experiment . In addition to theoretical and conceptual foundations, some form of evidence is required concerning the effectiveness of these tools, which typically demands for an experimental evaluation . Research on model-based development often reports on novel methods and techniques for model management and processing which are typically embodied in a tool.

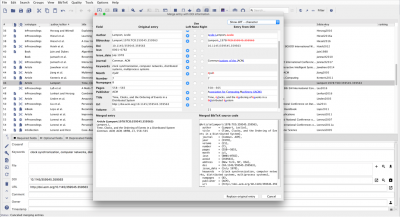

It promotes the use of models in all stages of development as a central means for abstraction and starting point for automation, e.g., for the sake of simulation, analysis or software production, with the ultimate goal of increasing productivity and quality.Ĭonsequently, model-based development strongly depends on good tool support to fully realize its manifold promises . Model-based development is a much appraised and promising methodology to tackle the complexity of modern software-intensive systems, notably for embedded systems in various domains such as transportation, telecommunications, or industrial automation . While we are convinced that this situation can only be improved as a community effort, this paper is meant to serve as starting point for discussion, based on the lessons learnt from our study. Given that tools are still being listed among the major obstacles of a more widespread adoption of model-based principles in practice, we see this as an alarming signal. We found none of the experimental results presented in these papers to be fully replicable, and 6% partially replicable. Given that both artifacts are needed for a replication study, only 9% of the tool evaluations presented in the examined papers can be classified to be replicable in principle. In a nutshell, we found that only 31% of the tools and 22% of the models used as experimental subjects are accessible. Our results from studying 65 research papers obtained through a systematic literature search are rather unsatisfactory. We investigate to which degree recent research reporting on novel methods, techniques, or algorithms supporting model-based development with MATLAB/Simulink meets the requirements for replicability of experimental results. Following principles of good scientific practice, both the tool and the models used in the experiments should be made available along with a paper, aiming at the replicability of experimental results.

This is typically achieved by experimental evaluations.

Research on novel tools for model-based development differs from a mere engineering task by not only developing a new tool, but by providing some form of evidence that it is effective.
